Cut-off: March 31, 2026 — both platforms are moving fast; treat this as a snapshot, not a verdict.

Over the past several projects, our team has deployed MCP servers and multi-agent systems into both AWS and Azure ecosystems. The experience forced us to develop a clear mental model for which platform fits which architecture decision — and the contrast is sharper than most comparison articles admit.

The headline thesis: AWS AgentCore builds agentic infrastructure from the ground up; Azure AI Foundry builds the agent experience from the top down. Both philosophies are internally consistent, both have real merit, and choosing the wrong one for your context has real cost.

The Architecture Philosophies Aren't Just Marketing

AWS AgentCore — Infrastructure First

Amazon launched AgentCore in preview at AWS Summit New York in July 2025 and took it to general availability in October 2025. The design reflects classic AWS product thinking: give builders composable, independently scalable primitives and let them wire the pieces together.

The core component surface looks like this:

AgentCore Runtime — containerized execution for agents and MCP servers. You write your server, run

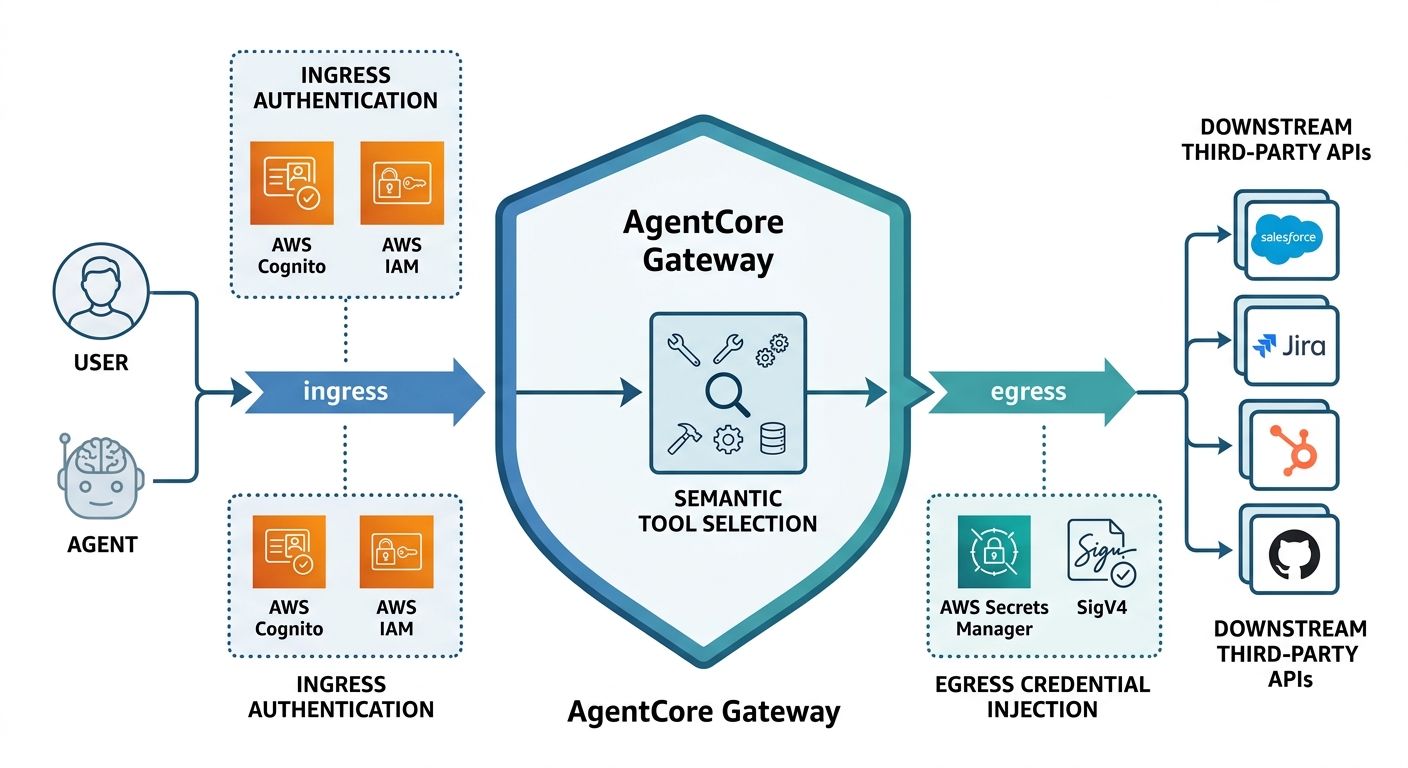

agentcore configureandagentcore launch, and the toolkit handles Dockerfile generation, IAM role creation, ECR push, and runtime provisioning. The resulting deployment ARN is your endpoint:arn:aws:bedrock-agentcore:{region}:{account}:runtime/{id}. Two commands from local dev to cloud. No YAML manifests, no K8s context switching.AgentCore Gateway — this is the piece we've gotten the most mileage out of. It converts your Lambda functions, OpenAPI specs, and Smithy models into MCP-compatible tool endpoints. The key technical differentiator AWS explicitly calls out: it is the only fully managed service that handles both ingress authentication and egress authentication in a single service boundary. Ingress covers who can call your tools; egress covers how your agent calls downstream APIs with credential injection. Semantic tool selection means your agent can search across thousands of registered tools at runtime without bloating the context window.

A technical architecture diagram showing the AgentCore Gateway as a central boundary; it demonstrates ingress authentication from a user or agent (via Cognito/IAM) and egress credential injection for calling downstream third-party APIs after semantic tool selection. AgentCore Identity — OAuth 2.0, AWS SigV4, Cognito user pools, machine-to-machine (M2M) credential flows, and user-delegated access. Starting October 2025, the service uses a Service-Linked Role for workload identity. Our pattern for MCP server authentication: Cognito user pool + Authorization Code flow (3LO) for user-facing tools, M2M client credentials for inter-agent calls.
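To make the M2M half of that pattern concrete, here is a minimal sketch of a standard OAuth 2.0 client-credentials token request, built with the Python standard library and deliberately not sent anywhere. The Cognito domain, client ID, secret, and scope name are hypothetical placeholders, and this is the generic OAuth flow rather than anything specific to the AgentCore SDK.

```python
import base64
import urllib.parse
import urllib.request


def build_m2m_token_request(token_url: str, client_id: str, client_secret: str,
                            scopes: list[str]) -> urllib.request.Request:
    """Build (but do not send) an OAuth 2.0 client-credentials token request."""
    # The client authenticates with HTTP Basic auth: base64("client_id:client_secret").
    basic = base64.b64encode(f"{client_id}:{client_secret}".encode()).decode()
    # The body is form-encoded; scopes are space-separated before encoding.
    body = urllib.parse.urlencode({
        "grant_type": "client_credentials",
        "scope": " ".join(scopes),
    }).encode()
    return urllib.request.Request(
        token_url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Basic {basic}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
    )


# Hypothetical values, for illustration only.
request = build_m2m_token_request(
    token_url="https://example-domain.auth.us-east-1.amazoncognito.com/oauth2/token",
    client_id="example-client-id",
    client_secret="example-client-secret",
    scopes=["tools/invoke"],
)
```

Passing `request` to `urllib.request.urlopen` would perform the exchange against a real user pool; the access token in the response is what an inter-agent caller would then present as a bearer token. The user-facing 3LO flow differs in that it needs a browser redirect and an authorization code, so it does not reduce to a single request like this.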

A2A Protocol (Runtime GA) — AgentCore Runtime supports the Agent-to-Agent protocol with both SigV4 and OAuth 2.0 for inbound auth. In practice this means you can run a LangGraph agent, a Strands agent, and a Google ADK agent on the same runtime, have them communicate via A2A with proper auth, and observe all of it through CloudWatch. We've validated cross-framework A2A: a Google ADK host agent running on AgentCore calling a Strands-based specialist agent on the same platform — it works.
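For readers who have not seen A2A on the wire, the sketch below builds the kind of JSON-RPC 2.0 request one agent sends to another. The method name and message fields follow our reading of the public A2A specification and should be checked against the spec version your frameworks implement; the prompt text and token are placeholders, and nothing here is AgentCore-specific.

```python
import json
import uuid


def build_a2a_message_send(text: str, request_id: str) -> dict:
    """Wrap a plain-text user message in a JSON-RPC 2.0 `message/send` call."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "message/send",  # assumption: method name per the A2A spec we read
        "params": {
            "message": {
                "role": "user",
                "parts": [{"kind": "text", "text": text}],
                "messageId": str(uuid.uuid4()),
            }
        },
    }


def build_headers(bearer_token: str) -> dict:
    """HTTP headers for an OAuth-protected A2A endpoint (the SigV4 variant is not shown)."""
    return {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {bearer_token}",
    }


# Placeholder prompt and token, for illustration only.
payload = build_a2a_message_send("Summarize open incidents for service X.", request_id="req-1")
headers = build_headers("example-access-token")
wire_body = json.dumps(payload)
```

Because the envelope is plain JSON-RPC over HTTP, the host agent's framework does not matter to the specialist agent, which is what makes the cross-framework setup described above workable.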

Additional managed services: AgentCore Memory (event-driven persistence + vector store), AgentCore Browser (auto-scaling managed Chromium sessions, zero to hundreds), AgentCore Code Interpreter (isolated multi-language sandbox), and AgentCore Observability (trajectory inspection, custom scoring, token/cost dashboards via CloudWatch and OpenTelemetry).

Framework stance: AgentCore is deliberately framework-agnostic. LangChain, LangGraph, CrewAI, Strands Agents (AWS's own open-source framework), and even Google ADK all work on the runtime. You bring your orchestration logic; AgentCore supplies the production-grade plumbing.

Azure AI Foundry — Experience First

Microsoft's trajectory is different. Azure AI Foundry Agent Service reached GA on June 16, 2025 at Build 2025. The product decision-making visibly flows from developer experience and the Microsoft ecosystem downward into infrastructure.

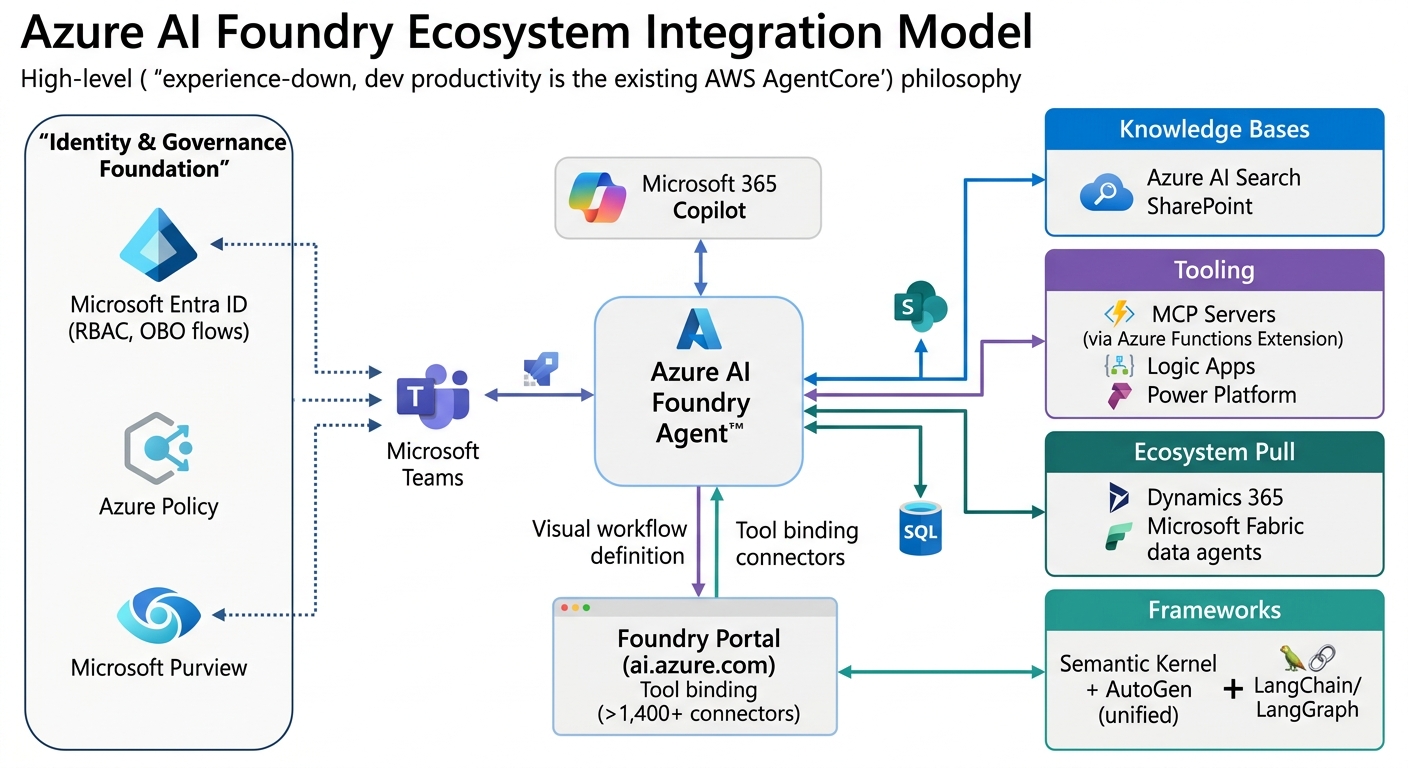

The most representative feature of this philosophy is the new Foundry portal at ai.azure.com: a visual workflow builder where you define agents, attach knowledge bases, wire in Azure AI Search or SharePoint indexes, and connect A2A endpoints — all in a GUI. The Tools tab surfaces over 1,400 business system connectors. This is very traditional Microsoft: meet the builder where they are, make everything available inside the Microsoft ecosystem, and provide a polished wizard path.

Key technical components:

Foundry Agent Service — GA as of June 2025. Agent definitions live as versioned resources in Foundry projects. The SDK (`azure-ai-projects`) gives you `create_version`, tool binding, and run management. For production deployments, the SDK + REST API path is the recommended route; the Agents Playground in the portal is still primarily for prototyping.

MCP Server Hosting — Azure went a different route than the monolithic runtime model. Two options as of early 2026:

- Azure Functions MCP Extension (broadly available January 2026): Supports .NET, Java, JavaScript, Python, TypeScript. Built-in Entra/OAuth auth, Protected Resource Metadata hosting, on-behalf-of (OBO) flows. A self-hosted option lets you deploy existing MCP SDK servers without code changes. Integrates directly with Foundry Agent Service for tool discovery.

- Foundry MCP Server (Preview, live December 3, 2025): Cloud-hosted managed endpoint at `mcp.ai.azure.com`. Zero local process management. Entra auth built in. Connect from VS Code, Visual Studio 2026, or the Foundry portal. This is MCP-as-a-surface-for-Foundry-itself — it exposes Foundry capabilities (model catalog, knowledge bases, evaluations) to agents via MCP, not a general-purpose MCP hosting runtime.
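"Protected Resource Metadata hosting" in the Functions option refers to the OAuth discovery document defined in RFC 9728, which tells an MCP client which authorization server to use. The sketch below shows roughly what such a document and the matching 401 challenge look like. The server origin, tenant ID, and scope are hypothetical, and the Functions extension generates this for you; the code only illustrates the shape.

```python
import json

# Well-known path defined by RFC 9728 (OAuth 2.0 Protected Resource Metadata).
WELL_KNOWN_PATH = "/.well-known/oauth-protected-resource"


def protected_resource_metadata(resource_origin: str, tenant_id: str,
                                scopes: list[str]) -> dict:
    """Describe a protected MCP server and the authorization server that guards it."""
    return {
        "resource": resource_origin,
        # Entra ID v2.0 issuer for the (hypothetical) tenant.
        "authorization_servers": [
            f"https://login.microsoftonline.com/{tenant_id}/v2.0"
        ],
        "scopes_supported": list(scopes),
        "bearer_methods_supported": ["header"],
    }


def unauthorized_challenge(resource_origin: str) -> str:
    """WWW-Authenticate value pointing an unauthenticated client at the metadata URL."""
    return f'Bearer resource_metadata="{resource_origin}{WELL_KNOWN_PATH}"'


# Hypothetical origin, tenant, and scope, for illustration only.
origin = "https://mcp.example.com"
metadata = protected_resource_metadata(
    origin,
    tenant_id="00000000-0000-0000-0000-000000000000",
    scopes=["api://example-mcp/tools.invoke"],
)
challenge = unauthorized_challenge(origin)
metadata_json = json.dumps(metadata, indent=2)
```

The sketch assumes the resource identifier is the bare origin; RFC 9728 places the path of a resource identifier after the well-known segment, which is not handled here.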

A2A Tool (Preview, December 2025) — Foundry agents can call any A2A-protocol endpoint as a first-class tool. Auth options: API key, OAuth2, or Entra Agent Identity. The semantic distinction Microsoft draws is meaningful for system design: the A2A tool means Agent A calls Agent B and B's response returns to A, with A maintaining thread ownership. A multi-agent workflow is full thread handoff. Both patterns are supported in the portal builder.
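The ownership distinction is easier to see in a toy model. The sketch below is not Foundry code; `Thread`, `call_as_tool`, and `hand_off` are hypothetical names that only model who owns the conversation after each kind of interaction.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Thread:
    owner: str
    messages: list = field(default_factory=list)  # (speaker, text) pairs


def call_as_tool(thread: Thread, caller: str, callee_name: str,
                 callee: Callable[[str], str], prompt: str) -> str:
    """A2A-tool style: the callee answers and the caller keeps the thread."""
    reply = callee(prompt)
    # The result is recorded by the caller; ownership does not change.
    thread.messages.append((caller, f"[tool result from {callee_name}] {reply}"))
    return reply


def hand_off(thread: Thread, new_owner: str, note: str) -> None:
    """Workflow style: the whole thread moves to the next agent."""
    thread.messages.append((thread.owner, f"[handoff to {new_owner}] {note}"))
    thread.owner = new_owner


def specialist(prompt: str) -> str:
    return f"specialist answer to: {prompt}"


thread = Thread(owner="agent_a")
call_as_tool(thread, "agent_a", "agent_b", specialist, "estimate cost")
owner_after_tool_call = thread.owner   # still agent_a
hand_off(thread, "agent_b", "please take over billing questions")
owner_after_handoff = thread.owner     # now agent_b
```

In design terms, the tool pattern suits a specialist whose answer feeds the calling agent's reasoning, while the handoff pattern suits cases where the second agent should carry the conversation from that point on.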

Microsoft Agent Framework (Public Preview, October 2025) — Consolidation of Semantic Kernel and AutoGen into a single commercial-grade SDK. MCP + A2A protocol support. This is how Microsoft unified their previously fragmented framework story.

Ecosystem integrations: Entra ID (RBAC + identity), Azure Policy and Purview (governance), Teams/M365/SharePoint (workflow surfaces), Logic Apps, Power Platform, GitHub Copilot, Azure Arc (hybrid/edge). If your organization runs on Microsoft, agents become extensions of the tools employees already use.

Feature Comparison Table

| Dimension | AWS AgentCore | Azure AI Foundry |
|---|---|---|
| Philosophy | Infrastructure-up, ops-focused | Experience-down, dev productivity |
| MCP Server Hosting | GA — Runtime, containerized, 2 CLI commands | Azure Functions (GA Jan 2026) + Foundry MCP Server (Preview) |
| Agent Hosting | GA (Runtime) | Agent Service GA; hosted agent deployment maturing |
| A2A Protocol | GA — SigV4 + OAuth 2.0 | Preview (Dec 2025) — key/OAuth/Entra Agent Identity |
| Gateway | AgentCore Gateway (GA) — ingress + egress auth, semantic tool discovery | Azure API Management + Functions (no unified dedicated gateway surface) |
| Authentication | Cognito, SigV4, OAuth, M2M | Entra ID, OAuth, Entra Agent Identity, OBO flows |
| Memory | Managed service (vector + event store) | Preview — memory in Foundry Agent Service |
| Browser Automation | AgentCore Browser — serverless, auto-scale 0→hundreds | Computer Use tool (Preview) |
| Code Interpreter | AgentCore Code Interpreter (GA) | Code Interpreter tool (GA) |
| Observability | CloudWatch, OpenTelemetry, trajectory inspection, custom scoring | Agent Monitoring Dashboard, Azure Monitor |
| Framework | Agnostic: Strands, LangChain, LangGraph, CrewAI, Google ADK | Semantic Kernel + AutoGen unified, LangChain, LangGraph |
| Model Choice | Multi-model: Claude, Llama, Mistral, Titan, etc. | Strong OpenAI/GPT exclusives + growing catalog |
| Enterprise Infra | VPC isolation, PrivateLink, CloudFormation, ECR | Azure Policy, RBAC, Arc (hybrid), Purview |
| Ecosystem Pull | AWS IAM, Lambda, S3, DynamoDB, CloudFormation | M365, Teams, SharePoint, Fabric, Power Platform, Entra |

What We Actually Learned Deploying Both

When AgentCore Wins

If your organization is AWS-native, the case is almost self-writing. Your IAM policies, VPC topology, CloudFormation stacks, and existing Lambda tools all become first-class citizens without translation layers. The Gateway's dual auth model (ingress + egress in one managed surface) saved us significant time compared to rolling per-service credential injection manually. The two-command deployment for MCP servers (configure + launch) is genuinely that simple for Python-based servers.
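To show what "ingress + egress in one managed surface" replaces, here is a deliberately tiny in-memory model of the idea. `ToyGateway`, `Tool`, and the token and secret values are all hypothetical; the real Gateway validates signed tokens and pulls credentials from managed storage, none of which is modeled here.

```python
from dataclasses import dataclass
from typing import Callable

# A tool handler receives the call arguments plus the outbound headers the gateway built.
ToolHandler = Callable[[dict, dict], dict]


@dataclass(frozen=True)
class Tool:
    name: str
    credential_name: str  # which stored downstream credential this tool needs
    handler: ToolHandler


class ToyGateway:
    def __init__(self, allowed_caller_tokens: set[str],
                 downstream_credentials: dict[str, str]) -> None:
        self._allowed = set(allowed_caller_tokens)
        self._credentials = dict(downstream_credentials)
        self._tools: dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def invoke(self, caller_token: str, tool_name: str, arguments: dict) -> dict:
        # Ingress: decide whether this caller may use the gateway at all.
        if caller_token not in self._allowed:
            raise PermissionError("caller is not authorized to use this gateway")
        tool = self._tools[tool_name]
        # Egress: attach the downstream credential; the caller never sees it.
        secret = self._credentials[tool.credential_name]
        outbound_headers = {"Authorization": f"Bearer {secret}"}
        return tool.handler(arguments, outbound_headers)


def fake_ticket_api(arguments: dict, headers: dict) -> dict:
    """Stand-in for a third-party API that checks its own credential."""
    ok = headers.get("Authorization") == "Bearer downstream-secret"
    return {"ticket": arguments["ticket_id"], "authenticated": ok}


gateway = ToyGateway({"agent-token"}, {"ticketing": "downstream-secret"})
gateway.register(Tool("get_ticket", "ticketing", fake_ticket_api))
result = gateway.invoke("agent-token", "get_ticket", {"ticket_id": "T-1"})
```

Without a shared boundary like this, each tool wrapper ends up carrying its own copy of both checks, which is the per-service credential injection work we were glad to stop maintaining.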

For multi-framework, cross-cloud agent interoperability (say, a Google ADK agent calling a Strands agent calling a Lambda tool), AgentCore's framework-agnostic stance and production-grade A2A authentication make it the right infrastructure layer. You're not forced to choose one orchestration framework.

The stateful MCP client (released late 2025) and managed memory make long-horizon agentic tasks tractable without building your own persistence infrastructure.

Watch out for: Several components were still in regional preview as of Q1 2026. If you're outside us-east-1/us-west-2, verify regional availability before committing architecture decisions. The platform also rewards AWS familiarity — if your team doesn't have IAM fluency, the learning curve is real.

When AI Foundry Wins

If your organization is Microsoft-native — running Teams, SharePoint, M365, Dynamics, Power Platform — the integration gravity is difficult to ignore. Agents built in Foundry can surface as extensions inside Teams, trigger Logic Apps, read SharePoint document libraries with Entra-respecting permissions, and connect to Fabric data agents. The deployment path for that story is genuinely shorter than any cross-cloud equivalent.

The visual multi-agent workflow builder in the new Foundry portal lowers the floor for solutions architects and senior developers who need to reason about and iterate on complex agent topologies without writing orchestration code first. The 1,400+ connector catalog in the Tools tab means "how do we connect to $SaaSPlatform" is often a configuration question, not an engineering one.

For MCP server deployments, the Azure Functions approach has a key advantage: if you already have Functions in your Azure footprint, the MCP extension is a deployment-model addition, not a new service to learn and operate. The self-hosted option is a good fit for teams with existing MCP SDK server code they want to lift into Azure without rewriting.

Watch out for: As of March 31, 2026, Foundry's support for deploying agents as scalable, independently addressable runtime services (the way AgentCore Runtime works) is still maturing. For sophisticated multi-agent deployments where you need fine-grained runtime control, network (VNet) isolation, or framework flexibility, AKS with the Agent Service SDK is still the recommended path. Foundry's hosted agent story is landing but isn't yet as operationally mature as AgentCore's. The A2A tool is also still in Preview, so production A2A workflows carry that caveat.

The Honest Bottom Line

These two platforms are converging in capability but diverging in philosophy — and that divergence is likely deliberate and durable. AWS thinks about agentic workloads the way it thinks about every cloud primitive: composable, scalable, ops-instrumented, developer-assembled. Microsoft thinks about them the way it thinks about everything in its productivity stack: wizard-configured, ecosystem-embedded, experientially cohesive.

For organizations with multi-cloud or cloud-agnostic mandates, the technical quality of both platforms is high enough that the selection criteria should be where your existing operational investment lives — IAM + VPC vs. Entra + M365 — not which platform's feature checklist is longer.

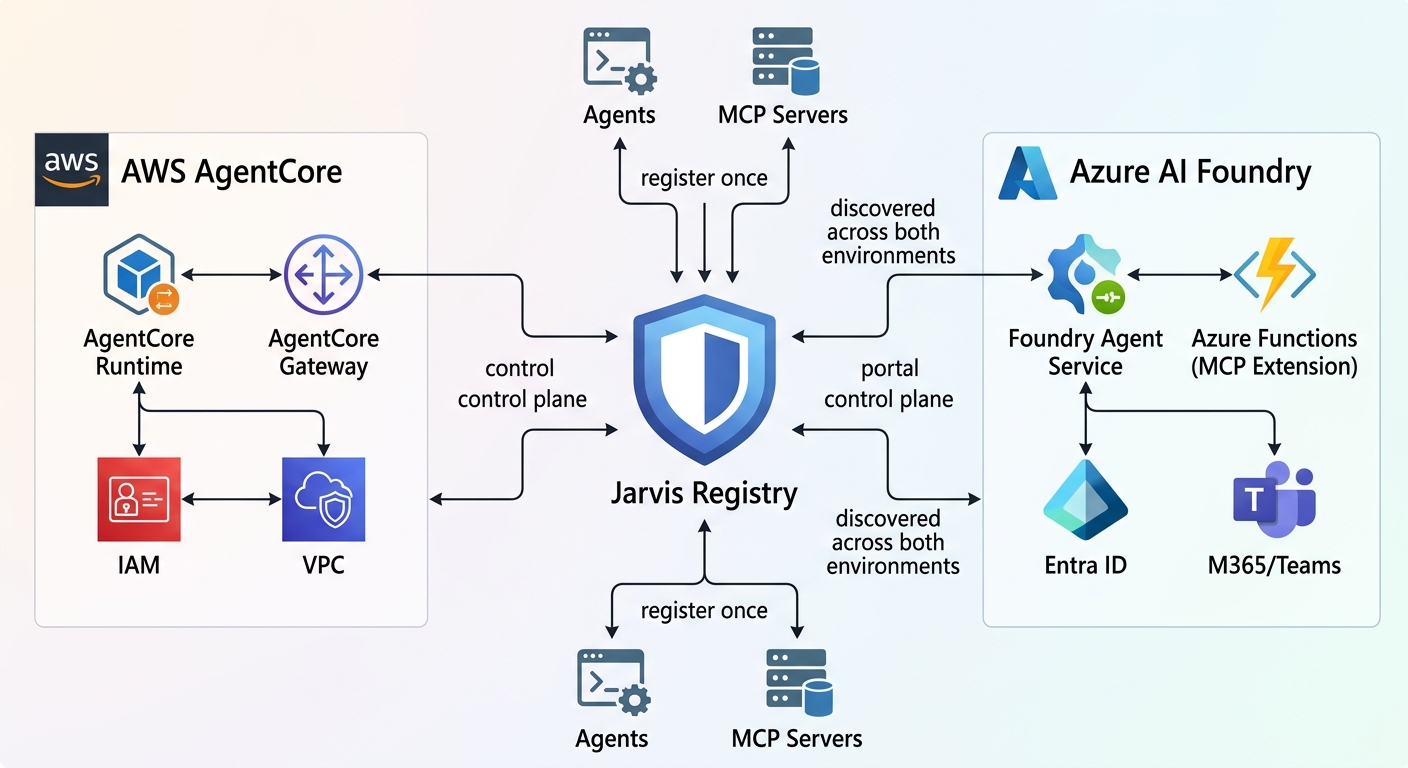

That said, if your organization genuinely operates across both clouds — or wants to avoid the lock-in entirely — the right answer isn't "pick one and squint." It's a federation layer. We've been building exactly that: Jarvis Registry is our open-source agent and MCP server registry that federates across Azure AI Foundry and AWS AgentCore. Rather than duplicating agent registrations and tool catalogs per cloud, Jarvis Registry acts as the vendor-neutral control plane — your agents and MCP servers register once and are discoverable across both ecosystems. If your architecture mandate is multi-cloud, this is the pattern we'd recommend before making irreversible bets on either platform's native registry or gateway.
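As a rough illustration of the register-once, discover-anywhere pattern, the sketch below models a vendor-neutral registry in a few lines. It is not Jarvis Registry's API or data model; `RegistryEntry`, `FederatedRegistry`, and every endpoint value are hypothetical and exist only to show the shape of the idea.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RegistryEntry:
    name: str
    kind: str                   # "agent" or "mcp_server"
    cloud: str                  # "aws" or "azure"
    endpoint: str
    capabilities: frozenset


class FederatedRegistry:
    """Single catalog for entries that live in different clouds."""

    def __init__(self) -> None:
        self._entries: dict[str, RegistryEntry] = {}

    def register(self, entry: RegistryEntry) -> None:
        # Register once: names are unique across clouds.
        if entry.name in self._entries:
            raise ValueError(f"{entry.name!r} is already registered")
        self._entries[entry.name] = entry

    def discover(self, capability: str, cloud: Optional[str] = None) -> list[RegistryEntry]:
        # Discovery is cloud-agnostic unless the caller narrows it.
        matches = [
            e for e in self._entries.values()
            if capability in e.capabilities and (cloud is None or e.cloud == cloud)
        ]
        return sorted(matches, key=lambda e: e.name)


registry = FederatedRegistry()
registry.register(RegistryEntry(
    name="billing-tools", kind="mcp_server", cloud="aws",
    endpoint="arn:aws:bedrock-agentcore:us-east-1:123456789012:runtime/example-id",
    capabilities=frozenset({"billing"}),
))
registry.register(RegistryEntry(
    name="invoice-agent", kind="agent", cloud="azure",
    endpoint="https://example.invalid/agents/invoice-agent",
    capabilities=frozenset({"billing", "invoicing"}),
))
billing_everywhere = [e.name for e in registry.discover("billing")]
billing_on_azure = [e.name for e in registry.discover("billing", cloud="azure")]
```

A production registry also has to handle auth, health, and sync with each cloud's native catalog, which is where most of the real work sits.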

Both platforms were iterating on roughly monthly release cadences through Q1 2026. If a specific feature comparison here doesn't match what you're seeing in the console, you're probably reading this three months after the last meaningful release.

Want to go deeper on specific sub-topics? Authentication patterns (OAuth/Entra for MCP servers), A2A protocol design across both platforms, the Strands vs. Semantic Kernel + AutoGen framework comparison, or how Jarvis Registry handles cross-cloud agent federation are all worth standalone posts.