The Problem

Enterprise AI adoption is accelerating, but governance is lagging dangerously behind. Teams across the organization are spinning up AI tools — sometimes with full IT awareness, sometimes without — and each new connection to an external AI model represents a potential data exposure, compliance gap, or audit nightmare. Security and legal teams are raising flags, but slowing down AI adoption isn't a viable answer either. The pressure is real and it's coming from both directions.

The core challenge isn't access to AI; it's governed access. Most enterprises have no systematic way to control what data flows into AI models, which employees can use which tools, or which outputs are generated and logged. When a sensitive customer record or proprietary business strategy ends up in a public AI model's prompt, the damage is often discovered far too late. Without guardrails built into the workflow, every AI interaction is an unmanaged risk.

Compounding the problem is fragmentation. Organizations are typically running multiple AI platforms — OpenAI, Anthropic, Google AI, Amazon Bedrock — each with its own access controls, usage policies, and billing models. Monitoring usage, enforcing consistent policy, and maintaining audit trails across that landscape is nearly impossible without a unifying governance layer. The result: shadow AI, inconsistent compliance posture, and no clear visibility into how AI is actually being used across the enterprise.

The Solution

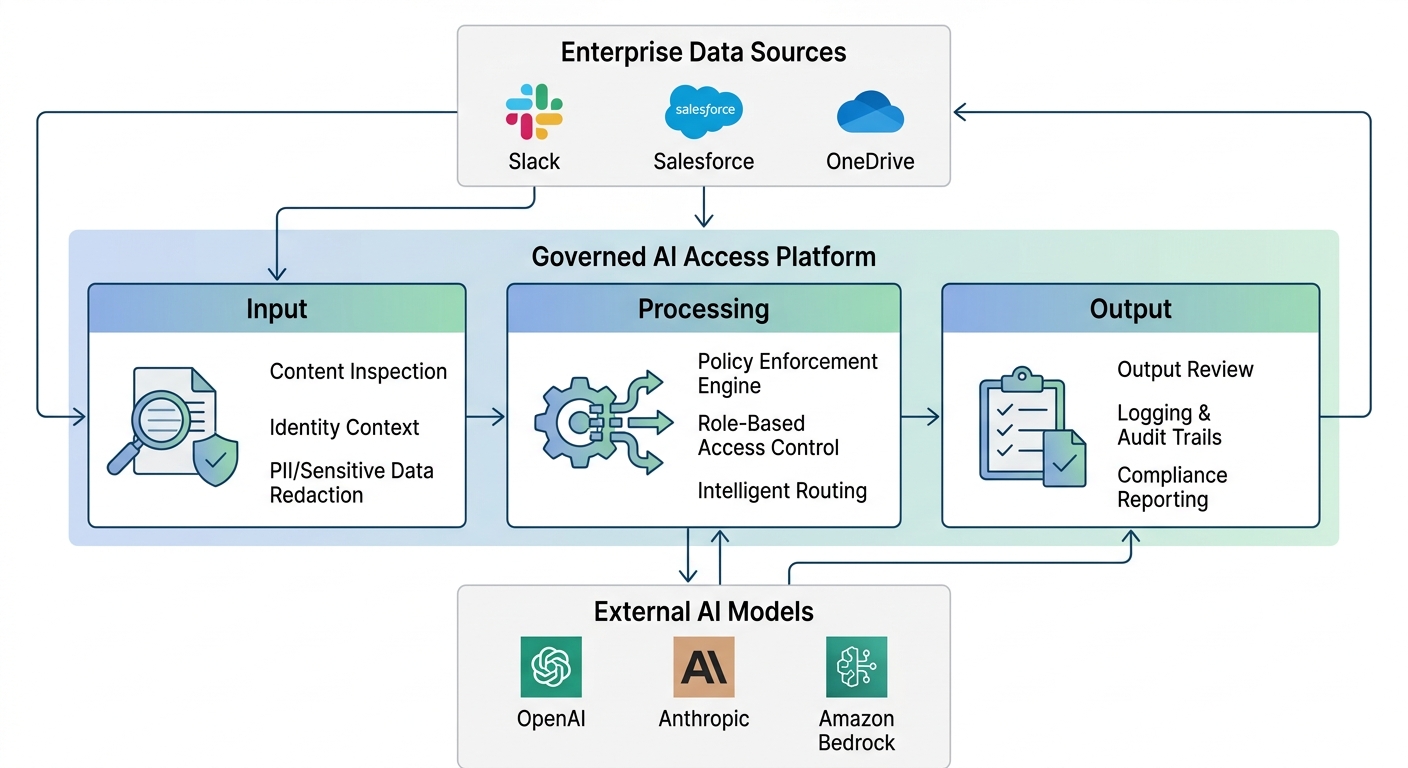

A governed AI access platform sits between your enterprise systems and the AI models your teams use — acting as a secure, policy-enforcing intermediary. Rather than allowing direct, unmediated connections to AI providers, all traffic flows through a centralized gateway that applies your organization's rules before anything enters or exits.

The architecture follows a straightforward three-stage flow:

Input — Data originates from your existing enterprise tools (Slack, Confluence, Salesforce, OneDrive, etc.) and enters the governance layer. At this stage, content is inspected, sensitive data is flagged or redacted based on policy, and identity context is applied to determine what the requesting user or system is permitted to do.

Processing — The governed gateway routes the request to the appropriate AI model — applying your defined policies, enforcing data boundaries, and ensuring that only permissible information reaches the external provider. This is where role-based access controls and custom guardrails do their work.

Output — Results are returned through the same governed layer, reviewed against output policies, and delivered to the end user or downstream system in a controlled, compliant manner. Every interaction is logged for audit and reporting purposes.

The net result is a unified governance fabric: one place to set policy, one place to monitor usage, and one consistent enforcement point regardless of which AI model is being accessed.
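The three-stage flow can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the `Gateway` class, the `ROLE_ALLOWED_MODELS` table, the model names, and the two regular expressions are all hypothetical, and real sensitive-data detection needs far more than pattern matching.

```python
import re
from dataclasses import dataclass, field

# Hypothetical policy table: which roles may reach which models.
ROLE_ALLOWED_MODELS = {
    "analyst": {"internal-llm"},
    "engineer": {"internal-llm", "external-llm"},
}

# Deliberately simple patterns, for illustration only.
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


@dataclass
class Gateway:
    audit_log: list = field(default_factory=list)

    def redact(self, text: str) -> str:
        """Replace each match of a sensitive pattern with a [LABEL] placeholder."""
        for label, pattern in REDACTION_PATTERNS.items():
            text = pattern.sub(f"[{label}]", text)
        return text

    def handle(self, user, role, model, prompt, call_model):
        # Input: inspect and redact before anything leaves the boundary.
        safe_prompt = self.redact(prompt)

        # Processing: enforce role-based access, then route to the model.
        if model not in ROLE_ALLOWED_MODELS.get(role, set()):
            self.audit_log.append(
                {"user": user, "model": model, "decision": "blocked"}
            )
            raise PermissionError(f"role {role!r} may not use {model!r}")
        raw_output = call_model(model, safe_prompt)

        # Output: apply output policy and record the interaction.
        safe_output = self.redact(raw_output)
        self.audit_log.append(
            {
                "user": user,
                "model": model,
                "decision": "allowed",
                "prompt": safe_prompt,
                "output": safe_output,
            }
        )
        return safe_output


def echo_model(model, prompt):
    """Stand-in for a real provider call; it simply echoes the prompt."""
    return f"{model} saw: {prompt}"


gateway = Gateway()
print(
    gateway.handle(
        "dana", "engineer", "external-llm",
        "Email jane@example.com about 123-45-6789", echo_model,
    )
)
# external-llm saw: Email [EMAIL] about [SSN]
```

The provider call is passed in as a function so the same enforcement path applies regardless of which model sits behind it, which is the point of a single enforcement layer.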

ROI & Business Value

| Outcome | Business Impact |
|---|---|
| Reduced data exposure risk | Sensitive PII, IP, and regulated data stays within defined boundaries, reducing breach risk and regulatory liability |
| Consistent compliance posture | Audit trails and policy enforcement apply uniformly across all AI interactions and all users |
| Multi-model flexibility without chaos | Teams can access best-in-class AI tools without IT losing control or visibility |
| Faster enterprise AI adoption | Security and legal teams can say yes to AI because guardrails are built into the process |
| Centralized visibility | Leadership gets a clear picture of AI usage, costs, and risk exposure across the organization |
| Reduced shadow AI | Employees route through governed channels because the experience is seamless, not because they're forced |

Practical Implementation Guide

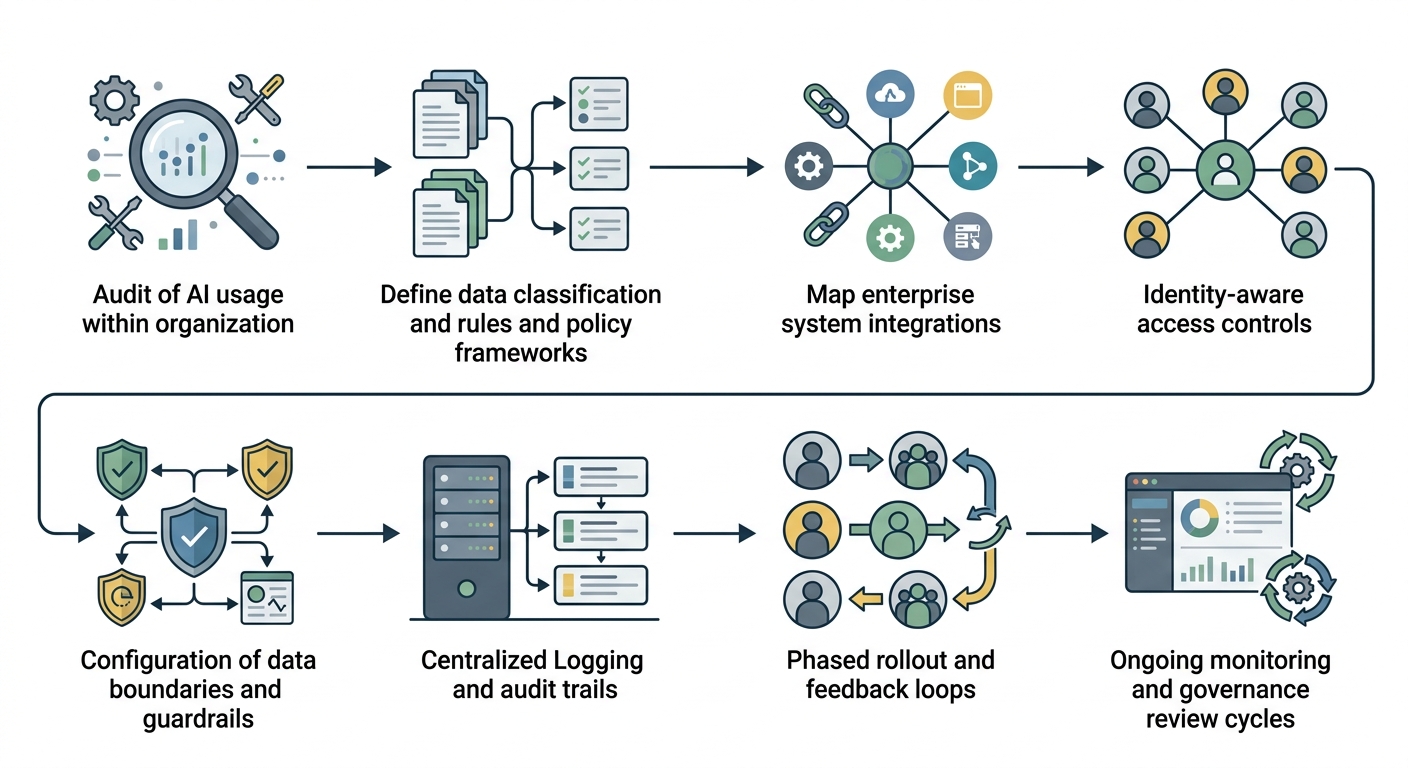

1. Audit your current AI usage. Before building governance, understand what's already happening. Identify which AI tools are in use, by whom, and whether any involve sensitive data. This baseline reveals your actual risk surface.

2. Define your data classification and policy framework. Work with legal, security, and compliance stakeholders to categorize your data (public, internal, confidential, regulated) and define corresponding access rules. Which data categories can reach which AI models? Under what conditions?

3. Map your enterprise system integrations. Identify the tools your teams use daily (collaboration platforms, CRMs, document stores) and determine which ones will connect to AI. These integration points are where governance must be applied at the source.

4. Implement identity-aware access controls. Connect your AI governance layer to your existing enterprise identity provider through the single sign-on standards it supports, such as SAML or OpenID Connect (which builds on OAuth 2.0). Governance policies should be tied to roles and user attributes, not managed separately.

5. Configure guardrails and data boundaries. Set up automated detection and handling for sensitive content: PII, financial data, protected health information, proprietary IP. Define what gets redacted, blocked, or flagged before it reaches an AI model.

6. Enable centralized logging and audit trails. Ensure every AI interaction (input, model used, output, user identity, timestamp) is captured in a tamper-evident log. This is your compliance record and your operational intelligence source.

7. Roll out in phases with feedback loops. Start with a pilot team or department. Validate that policies work as intended, collect user feedback on friction points, and refine before broader rollout. Governance that creates too much friction will drive shadow AI behavior.

8. Establish ongoing monitoring and governance reviews. AI models, use cases, and regulations evolve. Build a cadence for reviewing your governance policies, analyzing usage reports, and adjusting guardrails as your AI strategy matures.
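To make the "tamper-evident log" in step 6 concrete, here is one common way to approach it: a hash chain, where each record stores the SHA-256 digest of the record before it. This is a sketch under assumptions, not a prescribed design. The function names (`append_entry`, `verify_chain`) and the record fields are hypothetical, and a hash chain only detects edits; a production system would also need to protect the latest hash, for example by storing it in a separate write-once location.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _digest(record):
    """SHA-256 over every field except the record's own hash."""
    body = {k: v for k, v in record.items() if k != "hash"}
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log, user, model, prompt, output, timestamp=None):
    """Append one AI interaction, linking it to the previous record."""
    record = {
        "user": user,
        "model": model,
        "prompt": prompt,
        "output": output,
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    record["hash"] = _digest(record)
    log.append(record)
    return record


def verify_chain(log):
    """Return True only if no record was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for record in log:
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(record):
            return False
        prev_hash = record["hash"]
    return True


audit_log = []
append_entry(audit_log, "dana", "external-llm", "Summarize Q3 notes", "Summary text")
append_entry(audit_log, "sam", "internal-llm", "Draft a reply", "Draft text")
print(verify_chain(audit_log))  # True

audit_log[0]["output"] = "edited after the fact"
print(verify_chain(audit_log))  # False
```

Because each record's hash covers the previous record's hash, changing any earlier entry invalidates the chain from that entry onward, which is what lets an auditor detect after-the-fact edits.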