The Problem

Every enterprise leader knows AI is no longer optional — it's a competitive imperative. Yet despite the urgency, most organizations find themselves stuck in an exhausting cycle: running pilots that never graduate to production, watching AI initiatives stall in procurement and compliance reviews, or discovering that building a governed, secure AI platform in-house requires a team of specialists they simply don't have. The gap between "we need AI" and "we have AI working in production" is wider — and more expensive — than most expect.

The talent problem compounds the challenge. Deploying large language models (LLMs) at scale requires expertise across machine learning, cloud infrastructure, security, and compliance simultaneously. Even companies that can afford to hire are looking at a 12–18 month runway before seeing meaningful output. In a market where competitors are already automating workflows and accelerating decisions with AI, that timeline is a serious liability.

Beneath the technical complexity lies a quieter, more corrosive risk: the ungoverned AI problem. Employees are already using consumer AI tools — ChatGPT, Claude, and others — to handle sensitive business data, without IT oversight, without data guardrails, and without any audit trail. The organization isn't avoiding AI; it's just losing control of it. That's not a safer position. It's actually the most dangerous one.

The Solution

Governed enterprise AI adoption is about putting structure around something employees are already doing — and making it work better and safer for the organization. The approach rests on three architectural pillars.

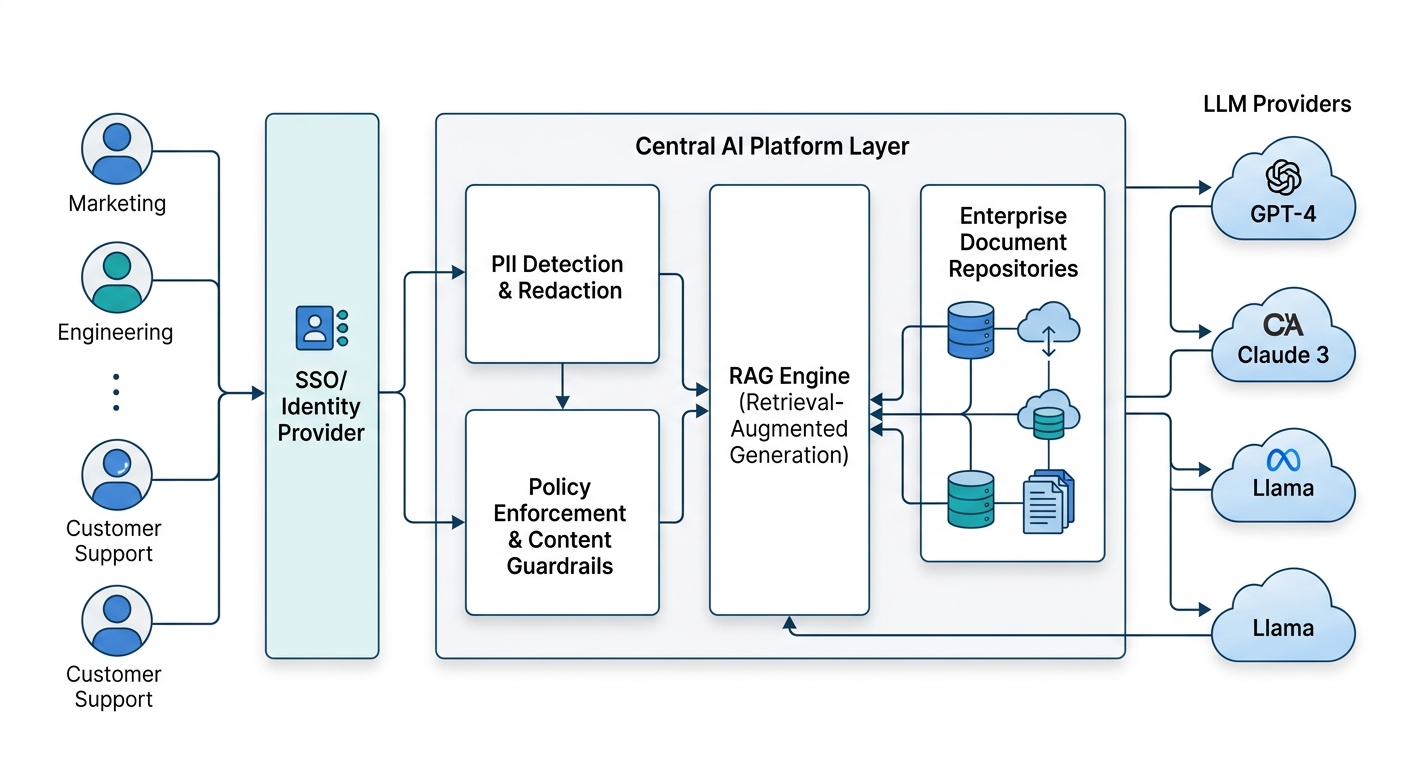

Centralized LLM Access with Guardrails. Rather than each team independently connecting to various AI models, a governed platform provides a single, controlled interface to multiple LLMs. This means the organization retains authority over which models are used, what data is passed to them, and under what conditions. PII detection, content filtering, and data residency requirements can be enforced consistently at the platform layer — not bolted on as an afterthought.
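To make the idea of platform-layer enforcement concrete, the following is a minimal sketch of a gateway that checks a model allowlist and redacts PII before a prompt reaches any provider. Everything in it is an assumption made for illustration: the model names, the `Gateway` class, the `echo_backend` stand-in, and the two regular expressions, which are far simpler than production PII detection would need to be.

```python
import re
from dataclasses import dataclass, field

# Hypothetical policy values; a real deployment would load these from configuration.
ALLOWED_MODELS = {"model-a", "model-b"}

# Deliberately simple patterns for illustration only.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact_pii(text: str) -> tuple[str, list[str]]:
    """Replace each match with a placeholder and report which kinds were found."""
    found = []
    for label, pattern in PII_PATTERNS.items():
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            found.append(label)
    return text, found


@dataclass
class Gateway:
    """Single entry point that applies policy before any model is called."""
    backends: dict  # model name -> callable(prompt) -> str
    audit_log: list = field(default_factory=list)

    def complete(self, user: str, model: str, prompt: str) -> str:
        # Reject models that are not approved or not configured, and log the attempt.
        if model not in ALLOWED_MODELS or model not in self.backends:
            self.audit_log.append({"user": user, "model": model, "allowed": False})
            raise PermissionError(f"model {model!r} is not approved")
        # Redact before the prompt leaves the platform, then record what was found.
        safe_prompt, pii = redact_pii(prompt)
        self.audit_log.append(
            {"user": user, "model": model, "allowed": True, "pii": pii}
        )
        return self.backends[model](safe_prompt)


def echo_backend(prompt: str) -> str:
    """Stand-in for a real provider client; it echoes what it received."""
    return f"echo: {prompt}"


gateway = Gateway(backends={"model-a": echo_backend})
reply = gateway.complete(
    "alice", "model-a", "Email jane.doe@example.com about SSN 123-45-6789"
)
print(reply)
```

Because every request passes through `complete`, the allowlist, the redaction step, and the audit record are applied uniformly regardless of which team or application is calling.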

Role-Based Access and Identity Integration. Enterprise AI should extend your existing identity and access management posture, not bypass it. By integrating with enterprise identity providers through single sign-on standards such as SAML and OpenID Connect (which builds on OAuth 2.0), organizations can ensure that the right people have access to the right AI capabilities, and nothing more. Sensitive knowledge bases, specialized agents, and high-privilege automations remain appropriately restricted, and every interaction is logged for accountability.
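As a rough illustration of role-based access, the sketch below maps identity-provider groups to AI capabilities and logs every decision. It assumes the `claims` dictionary comes from a token the identity provider has already issued and the platform has already verified; token validation itself is out of scope here. The group names, capability strings, and the `is_allowed` helper are all hypothetical.

```python
from datetime import datetime, timezone

# Hypothetical mapping from identity-provider groups to AI capabilities.
GROUP_PERMISSIONS = {
    "support-agents": {"kb:support", "agent:summarizer"},
    "finance": {"kb:finance", "agent:invoice-review"},
}

audit_log = []


def is_allowed(claims: dict, capability: str) -> bool:
    """Check a capability against the user's groups and log the decision."""
    granted = set()
    for group in claims.get("groups", []):
        granted |= GROUP_PERMISSIONS.get(group, set())
    allowed = capability in granted
    audit_log.append(
        {
            "time": datetime.now(timezone.utc).isoformat(),
            "user": claims.get("sub", "unknown"),
            "capability": capability,
            "allowed": allowed,
        }
    )
    return allowed


# Claims as they might appear after single sign-on (assumed shape).
alice = {"sub": "alice", "groups": ["support-agents"]}
can_read_support = is_allowed(alice, "kb:support")
can_read_finance = is_allowed(alice, "kb:finance")
print(can_read_support, can_read_finance)
```

Denied requests are logged as well as granted ones, since the refusals are often what a security review wants to see.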

An Enterprise Knowledge Base with RAG. Retrieval-Augmented Generation (RAG) is the technique that turns a generic LLM into an assistant that can answer questions about your business. The platform retrieves relevant passages from curated, organization-specific content (internal documentation, policies, SOPs, product knowledge) and supplies them to the model alongside the user's question. Employees get contextual answers grounded in company data, which makes hallucinated generalities far less likely. This is the architecture that makes AI genuinely useful for support agents, analysts, and operations teams.
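The retrieve-then-prompt flow behind RAG can be sketched in a few lines. This is a toy version under stated assumptions: production systems typically use an embedding model and a vector store, whereas this example substitutes bag-of-words cosine similarity so that it runs with the standard library alone. The documents, their ids, and the prompt wording are invented for illustration, and no model is actually called.

```python
import math
import re
from collections import Counter

# Hypothetical snippets standing in for curated internal content.
DOCUMENTS = {
    "pto-policy": "Employees accrue 1.5 days of paid time off per month of service.",
    "expense-policy": "Expense reports must be submitted within 30 days of purchase.",
    "vpn-guide": "Connect to the corporate VPN before accessing internal dashboards.",
}


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question: str, k: int = 1) -> list[str]:
    """Return the ids of the k documents most similar to the question."""
    q = tokenize(question)
    ranked = sorted(
        DOCUMENTS, key=lambda d: cosine(q, tokenize(DOCUMENTS[d])), reverse=True
    )
    return ranked[:k]


def build_prompt(question: str, k: int = 1) -> str:
    """Assemble the grounded prompt that would be sent to the model."""
    context = "\n".join(f"[{d}] {DOCUMENTS[d]}" for d in retrieve(question, k))
    return (
        "Answer using only the context below and cite the source id.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


prompt = build_prompt("How many days do I have to submit expense reports?")
print(prompt)
```

The point of the design is that the model answers from the retrieved passage and cites its id, so an answer can be traced back to a specific source document.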

ROI & Business Value

| Outcome | What It Means in Practice |
|---|---|
| Faster time-to-value | Production-grade AI deployments in weeks, not quarters |
| Reduced labor costs | Routine tasks (summarization, intake, Q&A) handled by AI agents around the clock |
| Lower compliance risk | Centralized policy enforcement eliminates shadow AI exposure |
| No specialized AI staff required | Teams adopt AI without needing ML engineers or data scientists on staff |
| Scalable automation | Agent-to-agent workflows tackle complex, multi-step processes beyond simple chatbots |
| Consistent employee experience | One governed interface across departments reduces training overhead and fragmentation |
| Audit-ready operations | Full usage monitoring and logging supports regulatory and internal review requirements |

The productivity gains are real across a broad range of functions: HR using AI for onboarding FAQs, legal teams analyzing contracts, finance automating invoice review, and customer support handling first-line queries — all through governed, traceable AI.

Practical Implementation Guide

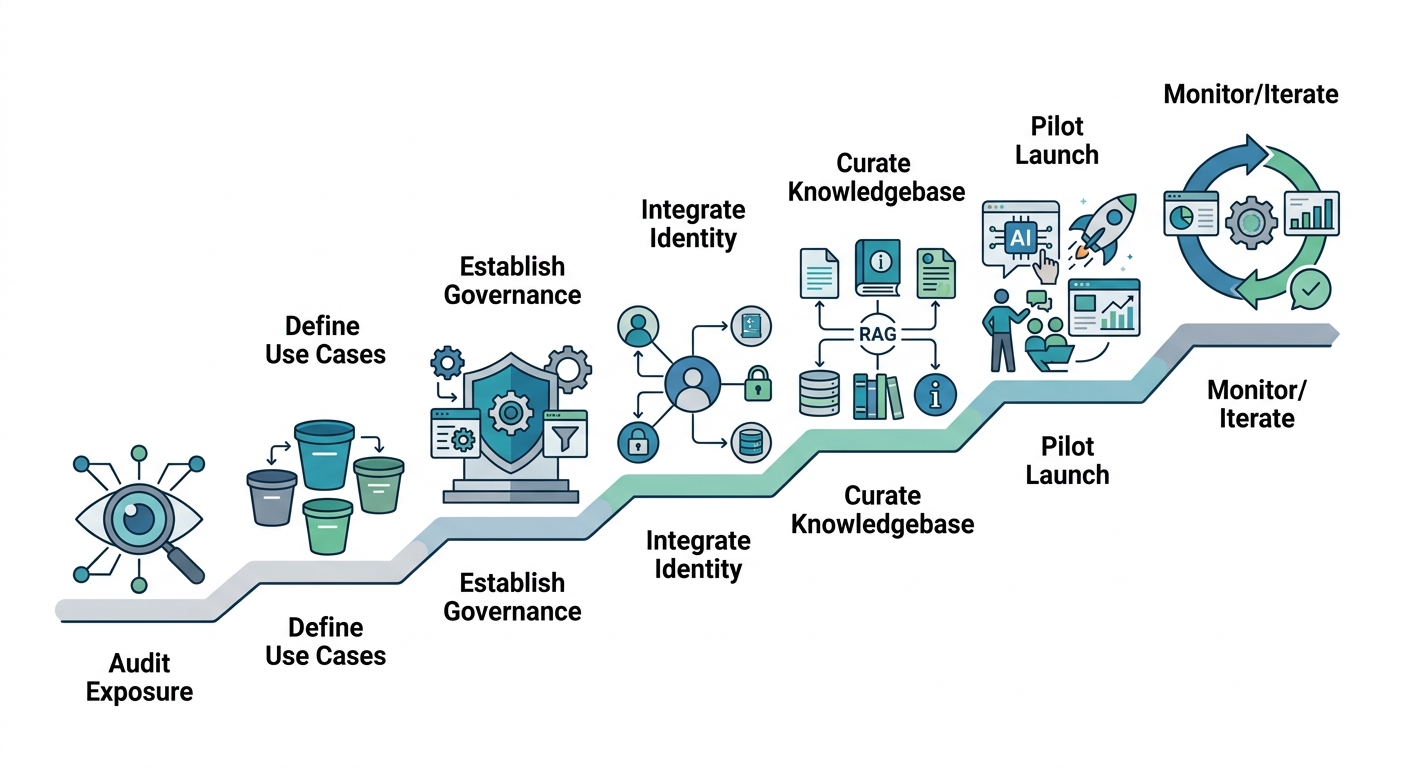

1. Audit Your Current AI Exposure. Before deploying anything, map where AI is already being used in your organization, sanctioned or not. This gives you a baseline for governance gaps and a prioritized list of use cases to address first.

2. Define Your Use Case Tier List. Classify potential AI applications by impact and complexity. High-impact, lower-complexity use cases (document summarization, Q&A, content generation) should be your launch targets. Save agentic automation for phase two once you have a governance baseline in place.

3. Establish Your Governance Framework First. Decide your data handling policies, acceptable LLM providers, PII sensitivity rules, and access tiers before deploying to end users. Retrofitting governance onto a live system is far more costly than building it in from the start.

4. Integrate with Existing Identity Infrastructure. Connect your AI platform to your existing identity provider (Okta, Microsoft Entra ID, formerly Azure AD, etc.). This ensures access control is managed through the same systems your IT and security teams already operate, not through a parallel, disconnected permission model.

5. Build and Curate Your Enterprise Knowledge Base. Identify the documents, policies, and knowledge sources that would make AI most useful for your priority use cases. Clean, well-structured content produces dramatically better RAG results. Start with a focused corpus and expand iteratively.

6. Pilot with a Contained, Enthusiastic Team. Launch with a team that has a clear use case and a tolerance for iteration. Capture feedback rigorously. This cohort becomes your internal advocates and provides the evidence base for broader rollout.

7. Monitor, Measure, and Iterate. Establish usage dashboards from day one. Track adoption rates, query patterns, and error cases. AI deployments improve rapidly with feedback loops, but only if you're measuring the right things from the start.
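The measurements named in the final step (adoption rates, query patterns, and error cases) can be computed from a basic usage log. The sketch below is one possible starting point rather than a prescribed metric set; the event fields, the sample events, and the `LICENSED_USERS` figure are assumptions made for illustration.

```python
from collections import Counter

# Hypothetical usage events; a real platform would read these from its audit log.
EVENTS = [
    {"user": "alice", "topic": "onboarding", "ok": True},
    {"user": "alice", "topic": "contracts", "ok": False},
    {"user": "bob", "topic": "onboarding", "ok": True},
    {"user": "carol", "topic": "invoices", "ok": True},
]
LICENSED_USERS = 10  # assumed number of people who have been given access


def summarize(events: list, licensed_users: int) -> dict:
    """Compute adoption rate, error rate, and the most common query topics."""
    active_users = {e["user"] for e in events}
    errors = sum(1 for e in events if not e["ok"])
    return {
        "adoption_rate": len(active_users) / licensed_users,
        "error_rate": errors / len(events) if events else 0.0,
        "top_topics": Counter(e["topic"] for e in events).most_common(2),
    }


report = summarize(EVENTS, LICENSED_USERS)
print(report)
```

Tracking these figures per team during the pilot gives the evidence base for deciding where to expand next.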