The Problem

Every week, another executive team greenlights a GenAI initiative — and within months, many of those same teams are quietly asking where the budget went. The models are expensive to run, the use cases weren't quite right, and nobody agreed on what "success" looked like before the first line of code was written. This is not a technology failure. It's a planning failure.

The core issue is that GenAI adoption often starts in the wrong place. Teams get excited about a model, spin up a pilot, and only later discover that the workflow they automated wasn't the one generating business value, or that a less expensive model would have achieved the same result at a fraction of the cost. Without a structured starting point, companies are essentially placing six-figure bets on intuition.

For organizations migrating away from an existing GenAI solution, the challenge compounds. Switching costs, retraining overhead, and data migration complexity can quietly dwarf the original implementation expense. Whether you're brand new to GenAI or looking to evolve a deployment that isn't delivering, the absence of a rigorous upfront assessment is one of the biggest drivers of wasted GenAI investment.

The Solution

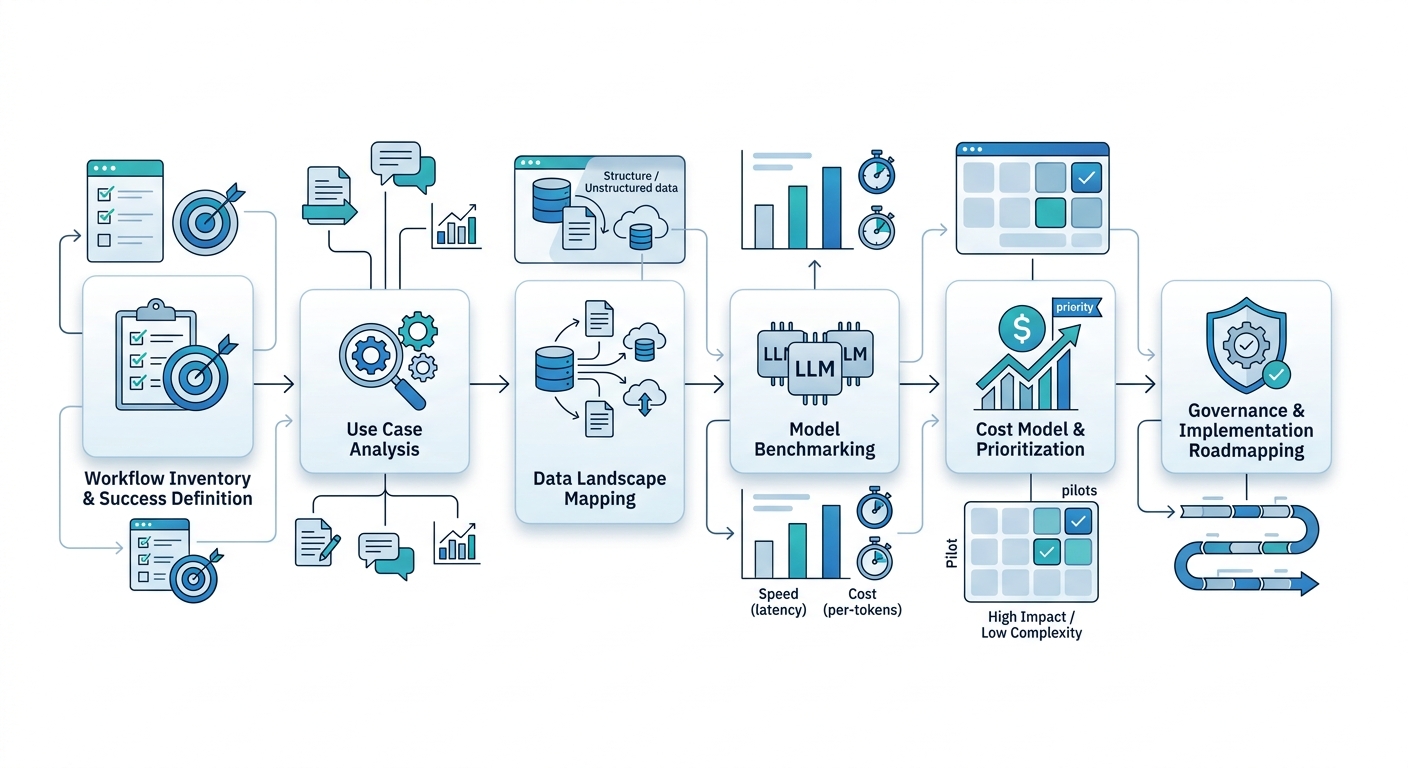

A GenAI assessment is a structured, diagnostic process that answers three questions before any major commitment is made: Where should we apply GenAI? Which model is right for our needs? And what will it actually cost?

Use Case Analysis maps your existing systems, workflows, and data assets against the landscape of high-impact GenAI applications — enterprise knowledge bases, document intelligence, data analytics automation, customer-facing chat, and more. The goal is to surface the two or three use cases with the highest return potential relative to implementation complexity, not just the ones that sound impressive in a board deck.

Model Evaluation benchmarks leading large language models against your specific tasks. Performance, latency, cost per token, and scalability all vary dramatically across models. A model that works well for open-ended creative tasks may perform poorly, and cost far more, on structured enterprise Q&A. Objective benchmarking removes vendor bias from the equation and gives your team real data to act on.
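As a rough illustration of what side-by-side benchmarking can look like, here is a minimal Python sketch. Everything in it is an assumption made for the example: `small_model` and `large_model` are hypothetical stand-ins for real model API calls, the tasks and per-token prices are invented, and substring matching is a deliberately crude proxy for output quality.

```python
import time
from dataclasses import dataclass
from statistics import mean
from typing import Callable, List, Tuple

Task = Tuple[str, str]                       # (prompt, text the answer must contain)
ModelFn = Callable[[str], Tuple[str, int]]   # returns (answer, tokens used)


@dataclass
class ModelResult:
    name: str
    accuracy: float        # fraction of tasks answered correctly
    avg_latency_s: float   # mean wall-clock seconds per task
    cost_per_task: float   # estimated spend per task, in dollars


def benchmark(name: str, call_model: ModelFn, tasks: List[Task],
              price_per_1k_tokens: float) -> ModelResult:
    """Run every task through one model and summarize quality, latency, and cost."""
    latencies, correct, tokens = [], 0, 0
    for prompt, expected in tasks:
        start = time.perf_counter()
        answer, tokens_used = call_model(prompt)
        latencies.append(time.perf_counter() - start)
        correct += int(expected.lower() in answer.lower())
        tokens += tokens_used
    n = len(tasks)
    cost = tokens / n / 1000 * price_per_1k_tokens
    return ModelResult(name, correct / n, mean(latencies), cost)


# Hypothetical stand-ins for real model calls (assumption, not a real API).
def small_model(prompt: str) -> Tuple[str, int]:
    answers = {"What is our refund window?": "30 days"}
    return answers.get(prompt, "I don't know"), 40


def large_model(prompt: str) -> Tuple[str, int]:
    answers = {
        "What is our refund window?": "The refund window is 30 days.",
        "Who approves travel?": "Your line manager approves travel.",
    }
    return answers.get(prompt, "I don't know"), 120


# Invented tasks and prices, for illustration only.
TASKS: List[Task] = [
    ("What is our refund window?", "30 days"),
    ("Who approves travel?", "line manager"),
]

RESULTS = [
    benchmark("small", small_model, TASKS, price_per_1k_tokens=0.5),
    benchmark("large", large_model, TASKS, price_per_1k_tokens=3.0),
]

for r in RESULTS:
    print(f"{r.name}: accuracy={r.accuracy:.0%}, "
          f"latency={r.avg_latency_s:.6f}s, cost/task=${r.cost_per_task:.2f}")
```

In a real assessment, the stubs would be replaced by calls to the candidate models, and the substring check by whatever quality measure fits the task, such as human review or a scoring rubric.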

Cost Estimation translates the technical findings into business language: projected implementation costs, ongoing inference and maintenance expenses, and realistic timelines. Knowing the numbers before the decision is made is the difference between a strategic investment and a budget surprise.
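The arithmetic behind such a projection is simple enough to sketch in a few lines of Python. The field names and every figure below are hypothetical, chosen only to show how the pieces combine. The human-oversight line is included on the assumption that review and QA time is budgeted as a recurring monthly cost.

```python
from dataclasses import dataclass


@dataclass
class CostAssumptions:
    queries_per_month: int
    tokens_per_query: int        # prompt + completion tokens, averaged
    price_per_1k_tokens: float   # blended dollar price per 1,000 tokens
    implementation_cost: float   # one-time build and integration cost
    monthly_maintenance: float   # hosting, monitoring, prompt upkeep
    monthly_oversight: float     # human review and quality assurance


def monthly_inference_cost(a: CostAssumptions) -> float:
    """Inference spend per month at the expected usage level."""
    return a.queries_per_month * a.tokens_per_query / 1000 * a.price_per_1k_tokens


def first_year_cost(a: CostAssumptions) -> float:
    """One-time implementation cost plus twelve months of running costs."""
    monthly = monthly_inference_cost(a) + a.monthly_maintenance + a.monthly_oversight
    return a.implementation_cost + 12 * monthly


# Illustrative numbers only; replace with figures from your own benchmarks.
example = CostAssumptions(
    queries_per_month=50_000,
    tokens_per_query=1_500,
    price_per_1k_tokens=0.002,
    implementation_cost=80_000,
    monthly_maintenance=2_000,
    monthly_oversight=3_000,
)

print(f"Monthly inference: ${monthly_inference_cost(example):,.2f}")
print(f"First-year total:  ${first_year_cost(example):,.2f}")
```

With these made-up inputs, inference is a small fraction of the total, and implementation, maintenance, and oversight dominate. Whether that holds for a given deployment depends entirely on its actual volumes and prices.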

ROI & Business Value

A well-executed GenAI assessment can pay for itself before deployment begins. Here's why:

| Value Driver | Business Outcome |
|---|---|
| Prioritized use cases | Resources focused on highest-ROI applications, not loudest requests |
| Objective model selection | Right-sized model choice reduces inference cost and avoids over-engineering |
| Transparent cost projections | Finance and leadership alignment before commitment, not after |
| Workflow fit analysis | Avoids automating processes that don't need automation |
| Migration readiness | Clear path for teams moving off underperforming GenAI deployments |
| Faster time to value | Teams start building the right thing on day one |

The organizations that see the strongest GenAI returns aren't necessarily the ones that moved fastest; they're the ones that aimed carefully before they moved.

Practical Implementation Guide

Any team can run a meaningful GenAI assessment by following these steps:

Inventory your workflows. Document the 10–15 processes in your organization that are most repetitive, knowledge-intensive, or bottlenecked by human availability. These are your candidate use cases.

Define success criteria upfront. For each candidate use case, specify what "good" looks like — accuracy thresholds, response time, cost per query, or reduction in manual effort. Vague goals produce vague results.

Map your data landscape. GenAI is only as useful as the data it can access. Identify where relevant documents, databases, and knowledge sources live, and flag any data governance or PII concerns early.

Benchmark at least two or three models. Run representative tasks through multiple models with realistic prompts and data volumes. Evaluate output quality, latency, and cost side by side on your actual workloads, not on vendor demos.

Build a cost model, not just a budget line. Estimate implementation cost, monthly inference cost at expected usage, and ongoing maintenance. Include the cost of human oversight and quality assurance.

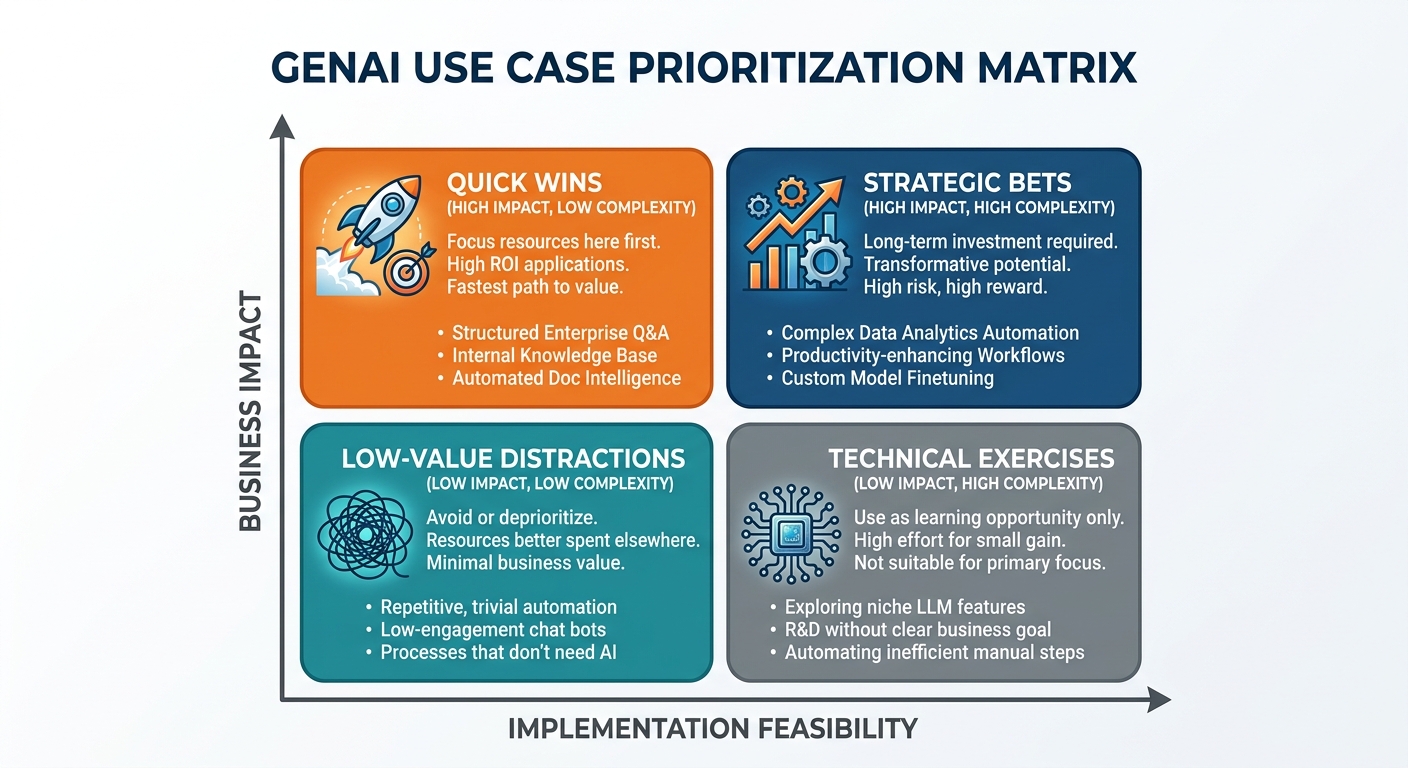

Prioritize ruthlessly. Score each use case on business impact, technical feasibility, and time-to-value. Pick one or two to pilot first. Resist the temptation to boil the ocean.

A 2x2 matrix plotting use cases on axes of 'Business Impact' and 'Implementation Feasibility' to identify high-ROI 'Quick Wins' versus low-value distractions. Document the governance requirements. Before any production deployment, identify who owns the AI outputs, how errors are handled, and what compliance or audit requirements apply to your industry.
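The prioritization step above lends itself to a small scoring sketch. The Python below is one possible way to do it, not a prescribed method: the 1-to-5 scales, the weights, the threshold, the example use cases, and the "long-term bet" quadrant label are all assumptions made for illustration. Only "quick win" and "low-value distraction" echo the matrix described above.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class UseCase:
    name: str
    impact: int         # business impact, 1 (low) to 5 (high)
    feasibility: int    # technical feasibility, 1 (hard) to 5 (easy)
    time_to_value: int  # 1 (slow) to 5 (fast)


# Hypothetical weights; tune them to your organization's priorities.
WEIGHTS = {"impact": 0.5, "feasibility": 0.3, "time_to_value": 0.2}


def score(uc: UseCase) -> float:
    """Weighted sum of the three criteria."""
    return (WEIGHTS["impact"] * uc.impact
            + WEIGHTS["feasibility"] * uc.feasibility
            + WEIGHTS["time_to_value"] * uc.time_to_value)


def quadrant(uc: UseCase, threshold: int = 3) -> str:
    """Place a use case on the impact/feasibility matrix."""
    if uc.impact >= threshold and uc.feasibility >= threshold:
        return "quick win"
    if uc.impact >= threshold:
        return "long-term bet"          # label is an assumption
    return "low-value distraction"


def shortlist(candidates: List[UseCase], pilots: int = 2) -> List[UseCase]:
    """Keep only quick wins, ranked by weighted score, capped at `pilots`."""
    wins = [uc for uc in candidates if quadrant(uc) == "quick win"]
    return sorted(wins, key=score, reverse=True)[:pilots]


# Invented candidates for illustration.
CANDIDATES = [
    UseCase("Knowledge base Q&A", impact=5, feasibility=4, time_to_value=4),
    UseCase("Contract summarization", impact=4, feasibility=2, time_to_value=3),
    UseCase("Meeting title generator", impact=1, feasibility=5, time_to_value=5),
]

for uc in sorted(CANDIDATES, key=score, reverse=True):
    print(f"{uc.name}: score={score(uc):.1f}, quadrant={quadrant(uc)}")

print("Pilot first:", [uc.name for uc in shortlist(CANDIDATES)])
```

The quadrant check is kept separate from the weighted score on purpose: an easy, fast use case can score respectably on the weighted sum while still delivering little business impact, and the matrix catches that.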